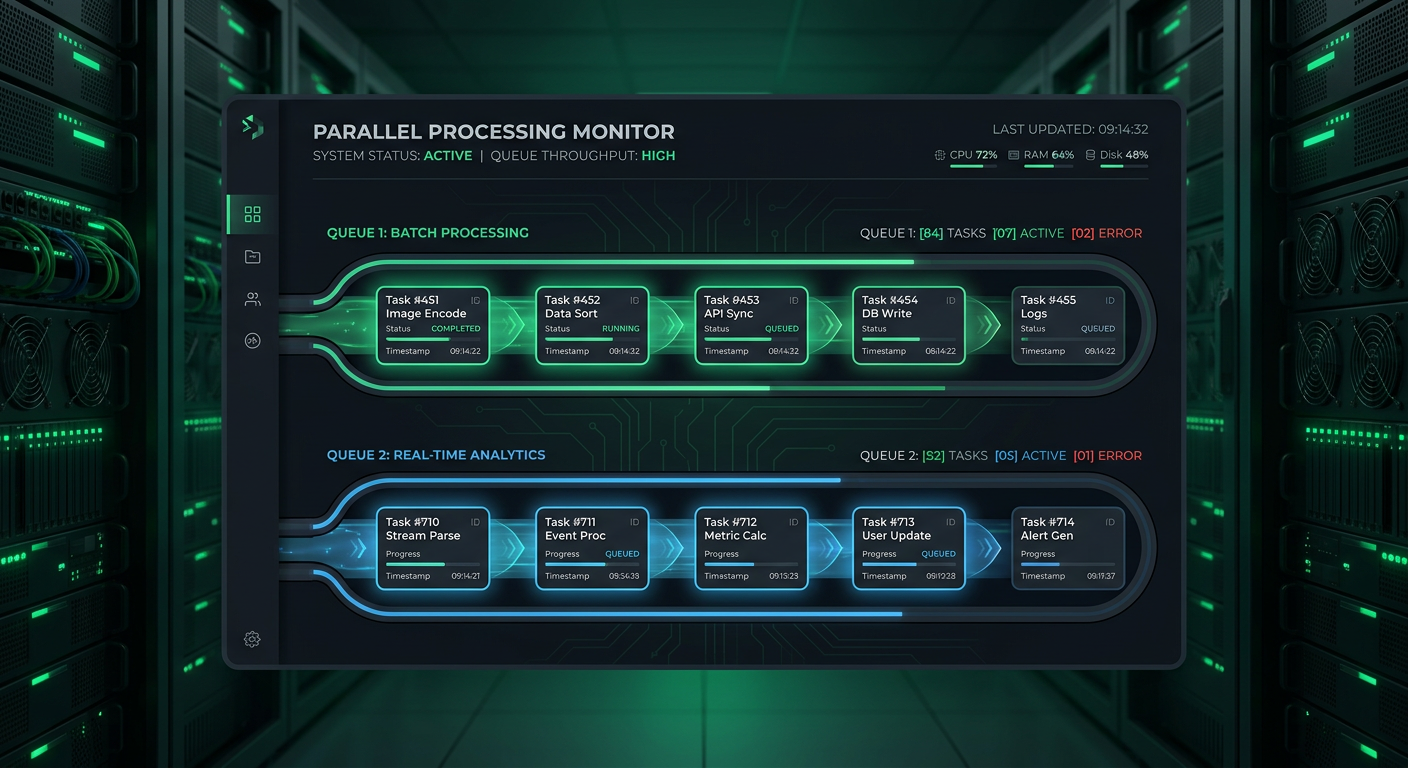

Dual-Queue Task System

Zero-infrastructure background processing: a dual-queue architecture that handles heavy Google Earth Engine (GEE) analysis alongside lightweight tasks, without Redis or Celery.

Key Results

- Client: Enterprise SaaS Client

- Industry: Sustainability & ESG

- Location: Europe

Overview

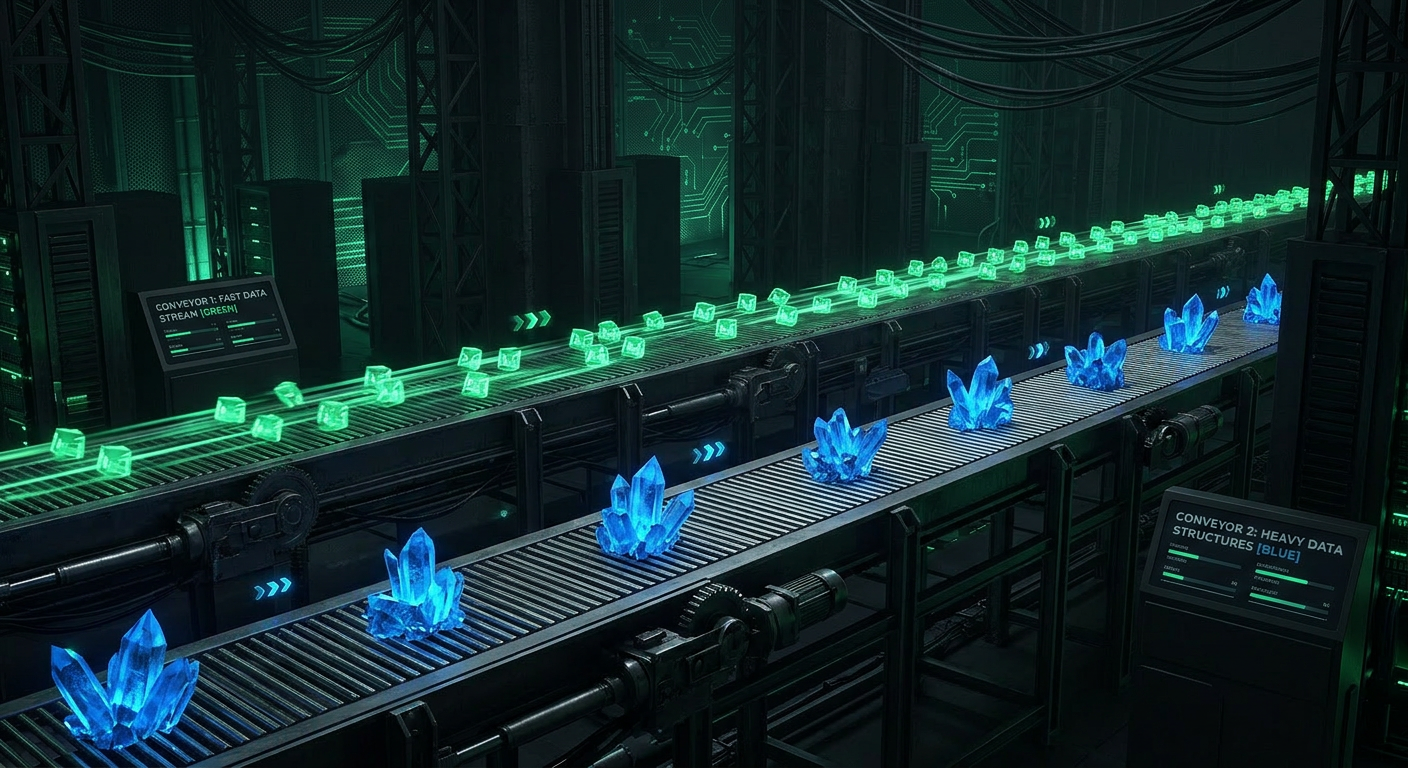

Sustainability platforms run heavy workloads—Google Earth Engine analysis, climate risk calculations, AI-powered gap analysis—alongside lightweight tasks like sending emails. The platform needed to run both without blocking web requests, but adding Celery and Redis would increase operational complexity for a single-instance Azure deployment.

We built an in-process dual-queue system that keeps heavy work from blocking time-sensitive operations, all without adding Redis, Celery, or any external infrastructure. The architecture uses dedicated worker pools running in the same Django process with separate queues for general tasks and site processing.

The Challenge

Mixed Workloads

A 30-second Earth Engine analysis shouldn’t delay a password reset email that needs to go out immediately. The system needed workload isolation without separate processes.

Infrastructure Constraints

The deployment model was a single Azure App Service instance—no room for separate worker processes or message brokers.

Operational Visibility

Engineers needed to monitor queue depth, worker health, and error rates without external tooling or additional services.

Our Solution

Architecture Overview

- General Task Queue: 2 worker threads for lightweight ops

- Site Processing Queue: 10 daemon threads for heavy lifting

- Debounced Indexing: 15-second timer batches requests

General Task Queue

A standard library queue with 2 worker threads handles lightweight operations—email sends, company-to-taxonomy matching, notifications. Tasks are submitted as function + payload pairs and execute in a shared thread pool.
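A minimal sketch of how such a queue could look, using only `queue` and `threading` from the standard library. The names `general_queue`, `submit_task`, and `start_general_workers` are illustrative assumptions, not the platform's actual code; only the worker count of 2 comes from the description above.

```python
import queue
import threading

# Hypothetical names; the case study does not publish its identifiers.
general_queue = queue.Queue()
NUM_GENERAL_WORKERS = 2  # worker count stated in the case study


def _worker(task_queue):
    """Pull (function, payload) pairs forever and run them one at a time."""
    while True:
        func, payload = task_queue.get()
        try:
            func(payload)
        except Exception as exc:
            # A failing task must not kill the worker thread.
            print(f"background task failed: {exc!r}")
        finally:
            task_queue.task_done()


def start_general_workers():
    """Start the worker threads; call once at process startup."""
    for i in range(NUM_GENERAL_WORKERS):
        threading.Thread(
            target=_worker,
            args=(general_queue,),
            name=f"general-worker-{i}",
            daemon=True,
        ).start()


def submit_task(func, payload):
    """Enqueue a function + payload pair; returns immediately."""
    general_queue.put((func, payload))


if __name__ == "__main__":
    def send_email(payload):  # stand-in for a real email send
        print(f"sending email to {payload['to']}")

    start_general_workers()
    submit_task(send_email, {"to": "user@example.com"})
    general_queue.join()  # wait for the demo task before exiting
```

Because tasks are plain Python callables held in memory, nothing has to be serialized, but queued work is lost if the process restarts.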

Site Processing Queue

A separate queue with 10 dedicated daemon threads handles heavy lifting—GEE processing, biodiversity risk calculations, AI autofill, gap analysis. Each worker runs a tight loop, pulling callables and executing them independently.
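The isolation between the two queues can be illustrated with a small experiment. This is a sketch under assumptions: `start_pool` and `demo_isolation` are hypothetical helpers, `time.sleep` stands in for a GEE job, and setting an event stands in for an email send. Only the pool sizes (2 and 10) come from the description above.

```python
import queue
import threading
import time


def start_pool(task_queue, num_workers, name):
    """Start daemon threads that each loop over the given queue."""
    def loop():
        while True:
            func, args = task_queue.get()
            try:
                func(*args)
            except Exception as exc:
                print(f"[{name}] task failed: {exc!r}")
            finally:
                task_queue.task_done()

    for i in range(num_workers):
        threading.Thread(target=loop, name=f"{name}-{i}", daemon=True).start()


general_queue = queue.Queue()
site_queue = queue.Queue()
start_pool(general_queue, 2, "general")
start_pool(site_queue, 10, "site")


def demo_isolation(heavy_seconds=0.5, heavy_jobs=20):
    """Saturate the site pool, then time one lightweight task."""
    for _ in range(heavy_jobs):
        # Stand-in for a long-running GEE analysis.
        site_queue.put((time.sleep, (heavy_seconds,)))

    done = threading.Event()
    started = time.monotonic()
    # Stand-in for a password reset email on the general queue.
    general_queue.put((done.set, ()))
    done.wait(timeout=5)
    return time.monotonic() - started


if __name__ == "__main__":
    print(f"lightweight task finished in {demo_isolation():.4f}s")
```

The lightweight task completes without waiting for the heavy backlog because the two pools never share a queue. Note that this holds for work that waits on I/O, such as network calls; CPU-bound pure-Python tasks in any thread would still compete for the interpreter's global lock.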

Debounced Indexing

After file uploads, a 15-second timer batches indexing requests. Rapid uploads don’t trigger redundant indexer runs—only one run fires after the burst settles.
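One common way to get this behavior is to restart a `threading.Timer` on every request. The sketch below assumes that approach; the `Debouncer` class and `run_indexer` callback are hypothetical names, and only the 15-second window comes from the description above.

```python
import threading


class Debouncer:
    """Run `callback` once, `delay_seconds` after the most recent trigger."""

    def __init__(self, delay_seconds, callback):
        self._delay = delay_seconds
        self._callback = callback
        self._lock = threading.Lock()
        self._timer = None

    def trigger(self):
        with self._lock:
            # Cancel the pending run, if any, and restart the countdown.
            if self._timer is not None:
                self._timer.cancel()
            self._timer = threading.Timer(self._delay, self._callback)
            self._timer.daemon = True
            self._timer.start()


def run_indexer():  # stand-in for the real indexing job
    print("indexing uploaded files")


# 15-second window, as described in the case study.
index_debouncer = Debouncer(15, run_indexer)

# Each upload handler would call index_debouncer.trigger();
# a burst of uploads then results in a single indexer run.
```

If a trigger arrives at the exact moment the timer fires, the callback can still run twice, so the indexing job itself should be safe to repeat.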

Task Statistics Endpoint

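A /background-tasks/status/ endpoint exposes queue sizes, completion counts, error rates, and timestamps. The counters are read and updated under a lock, so the figures stay consistent while worker threads are running, and engineers can check queue health without external tooling.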
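A sketch of the kind of lock-protected counters that could sit behind such an endpoint. The `TaskStats` class and its field names are assumptions for illustration; the actual response format is not described here.

```python
import threading
import time


class TaskStats:
    """Counters shared by all worker threads, guarded by one lock."""

    def __init__(self):
        self._lock = threading.Lock()
        self._completed = 0
        self._failed = 0
        self._last_completed_at = None
        self._last_error_at = None

    def record_success(self):
        with self._lock:
            self._completed += 1
            self._last_completed_at = time.time()

    def record_failure(self):
        with self._lock:
            self._failed += 1
            self._last_error_at = time.time()

    def snapshot(self, queues):
        """Return a JSON-serializable view; `queues` maps name -> Queue."""
        with self._lock:
            total = self._completed + self._failed
            return {
                # qsize() is approximate while workers are running.
                "queue_sizes": {n: q.qsize() for n, q in queues.items()},
                "completed": self._completed,
                "failed": self._failed,
                "error_rate": self._failed / total if total else 0.0,
                "last_completed_at": self._last_completed_at,
                "last_error_at": self._last_error_at,
            }
```

Workers would call `record_success()` or `record_failure()` after each task, and a Django view could return the snapshot dictionary wrapped in a `JsonResponse`.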

Performance Metrics

- 0 external dependencies

- 12 worker threads

- 100% task isolation

- 15-second debounce window

Technology Stack

Backend

- Django

- Python 3.11

- Gunicorn

Infrastructure

- Azure App Service

- PostgreSQL

- Single Instance

Processing

- Threading

- Queue Module

- Google Earth Engine

Outcomes & Impact

Operational Impact

- Password reset emails are sent within seconds, even during heavy GEE processing batches

- Zero external dependencies—no Redis, no Celery, no message broker to manage

- Operations team monitors queue health through a single status endpoint

Technical Achievements

- Queue isolation ensures email delivery isn’t delayed by long-running GEE jobs

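- All workers are daemon threads, so the process exits without an explicit shutdown step (Python stops daemon threads abruptly at interpreter exit, so tasks still in flight are not completed)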

- Tasks submitted as callables with arguments—no job serialization needed

Infrastructure Simplicity

- Deployment remains a simple single-instance App Service

- No worker process configuration or broker management required

- Thread-safe statistics endpoint provides full visibility into queue health

“The engineering team delivered a robust, production-ready solution that exceeded our expectations. The dual-queue system handles our complex workloads seamlessly without any additional infrastructure overhead.”

Engineering Director

Enterprise Client

Related Case Studies

Key Vault Secrets Management

Azure Key Vault as single source of truth for 40+ secrets with CI sync, managed identity auth, and file-based secret support.

CI/CD Pipeline with Auto-Migrate

Automated deployment pipeline with secret sync, deterministic startup, post-deploy health checks, and zero manual steps.

Security Middleware Stack

Defense-in-depth request hardening with size limits, injection logging, brute-force tracking, and rate limiting—all without external services.
